Creating Kubernetes Clusters with Terraform: Learning Provisioners

This is the last lesson of the Terraform Lightning course.

There is just one more topic we should talk about and it is server provisioners.

As we've discussed in the first video of the course, Terraform was not made to be a configuration management tool, like Puppet or Chef.

But most likely you are still going to create servers with Terraform and these servers still need some kind of configuration.

There are many ways to bootstrap a server, including cloud-native tools like Ignition, or even going for immutable infrastructure and rolling out servers from pre-built machine images.

In this article, I want to focus on the most traditional way to bootstrap a server: connecting to it via SSH and running some automation scripts.

To make this lesson a bit more fun and to drop some fancy technologies on top, I am going to bootstrap a complete Kubernetes cluster with the help of Terraform. I am going to use a certified Kubernetes distribution called "k3s".

In short, k3s is a slimmed-down, low-footprint Kubernetes distribution, created for Internet of Things and edge computing use cases. We will take a closer look at k3s in another video on the mkdev channel.

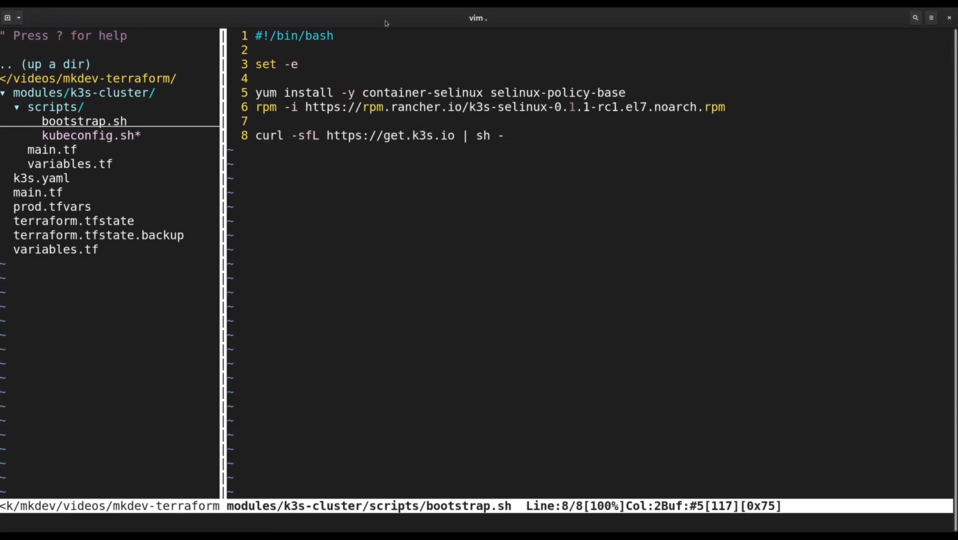

To bootstrap the server, we need a script. Terraform supports provisioning with Puppet, Chef and a few other tools, but we are going to stick with a good old shell script.

I've prepared the script in advance. It installs a couple of packages and then runs the official k3s installation script.

Now let's go to our k3s cluster Terraform module.

    provisioner "remote-exec" {
      script = "${path.module}/scripts/bootstrap.sh"

      connection {
        user = "root"
        host = "${self.access_public_ipv4}"
      }
    }

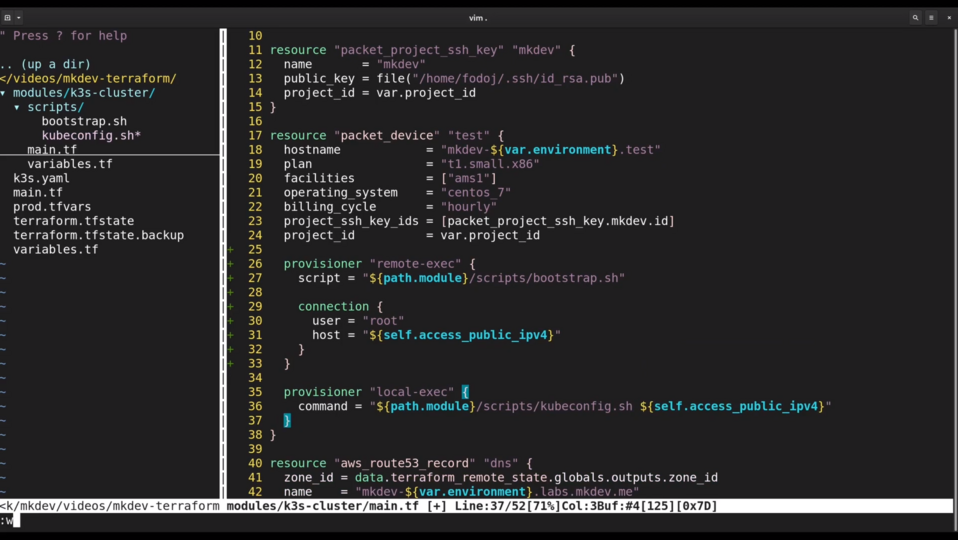

    provisioner "local-exec" {
      command = "${path.module}/scripts/kubeconfig.sh ${self.access_public_ipv4}"
    }

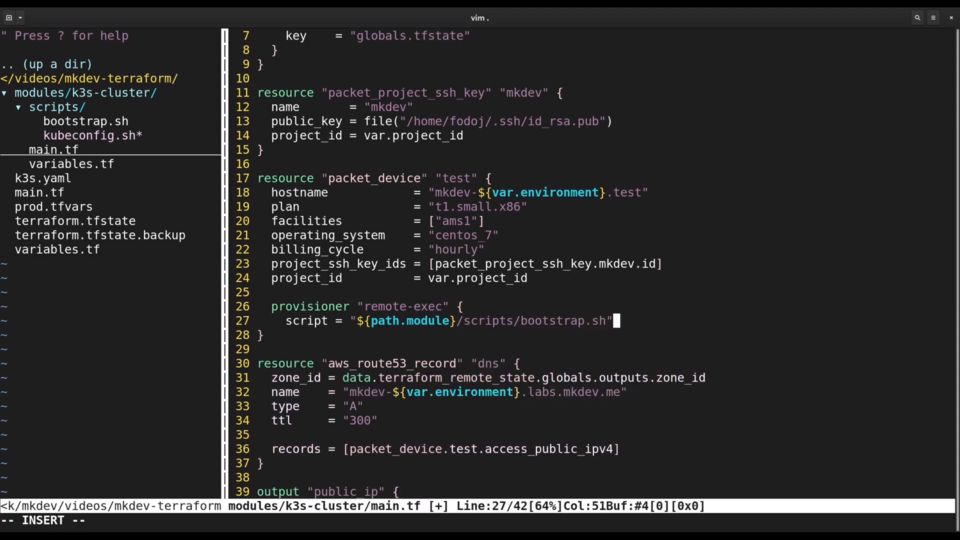

To provision the server, we only need to add a provisioner block of type remote-exec inside our Packet device resource.

Inside the provisioner block, I am going to set the script parameter to reference the shell script we just saw. Terraform will copy this script to the server and then execute it.
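For very short bootstraps, remote-exec also accepts an inline list of commands instead of a script file. The sketch below shows roughly what such a bootstrap could look like. The package list and the use of apt-get (that is, a Debian or Ubuntu image) are assumptions; the actual course script may install different packages. The last command is the installer published by the k3s project.

```hcl
# Sketch only: an inline alternative to a bootstrap script file.
# Assumes a Debian/Ubuntu image; the package list is a guess.
provisioner "remote-exec" {
  inline = [
    "apt-get update -y",
    "apt-get install -y curl",
    # Official k3s installation script
    "curl -sfL https://get.k3s.io | sh -",
  ]

  connection {
    user = "root"
    host = "${self.access_public_ipv4}"
  }
}
```

A separate script file is usually easier to read and test once the bootstrap grows beyond a handful of commands.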

Note how I use a special path.module construct so that Terraform searches for the script in a path relative to the module directory.
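To make the placement concrete, here is a minimal sketch of how the provisioner sits inside the resource. The resource name, the variable and all argument values (hostname, plan, facility, operating system) are placeholders chosen for illustration, not the values used in the course repository.

```hcl
# Hypothetical variable holding the Packet project ID.
variable "project_id" {}

# Placeholder values throughout; adjust them to your own project.
resource "packet_device" "k3s" {
  hostname         = "k3s-server"
  plan             = "t1.small.x86"
  facilities       = ["ams1"]
  operating_system = "ubuntu_18_04"
  billing_cycle    = "hourly"
  project_id       = "${var.project_id}"

  # Runs once, after the device has been created.
  provisioner "remote-exec" {
    # Resolved relative to the module directory, not the working directory.
    script = "${path.module}/scripts/bootstrap.sh"

    connection {
      user = "root"
      host = "${self.access_public_ipv4}"
    }
  }
}
```

Because the path is built from path.module, the module keeps working no matter which directory the root configuration is applied from.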

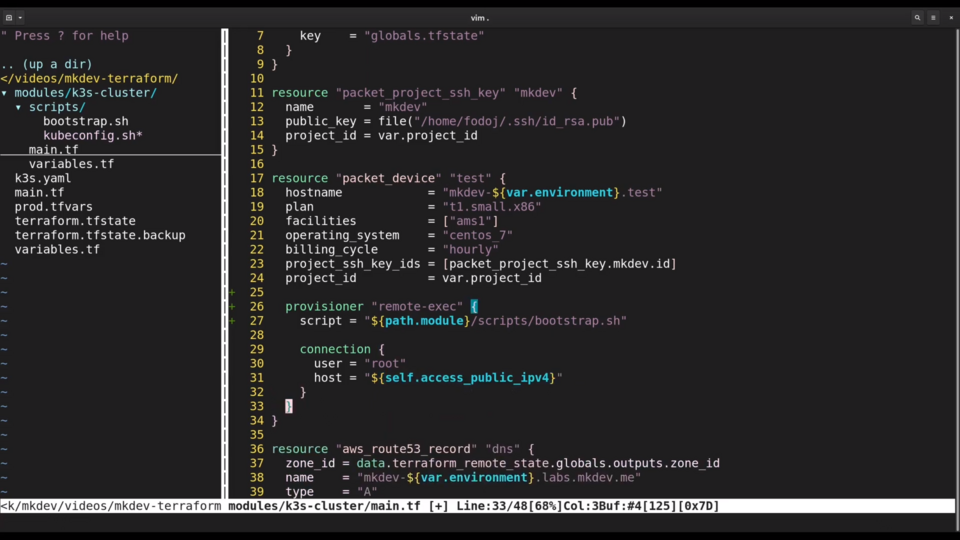

I also need to create a connection block inside the provisioner, specifying the IP address and the username.

Another special construct here is self, which lets us reference the attributes of the resource from inside its own declaration.
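The connection block accepts a few more arguments than the two used here. The sketch below spells out some of them, assuming key-based SSH authentication; the private key path is a hypothetical example, and when no key is given Terraform can fall back to the local SSH agent.

```hcl
provisioner "remote-exec" {
  script = "${path.module}/scripts/bootstrap.sh"

  connection {
    type = "ssh"  # the default connection type
    user = "root"
    host = "${self.access_public_ipv4}"

    # Assumption: the key lives at this path on the machine running Terraform.
    private_key = "${file("~/.ssh/id_rsa")}"

    # How long to keep retrying until SSH becomes available.
    timeout = "5m"
  }
}
```

The timeout matters in practice, because a freshly created server often needs a while before it accepts SSH connections.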

In addition to the remote-exec provisioner, I will also add a local-exec provisioner. Unlike remote-exec, a local-exec command is executed on the same machine where we run Terraform commands.

The script itself will simply copy the default kubeconfig from the server to the local machine. This is needed so that we can verify that our Kubernetes cluster is up and running. Let's give it a try.
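As a rough idea of what such a copy step involves, the same thing could be written directly as a local-exec command. This is a sketch rather than the contents of the course's kubeconfig.sh: it assumes scp is available locally, relies on k3s writing its kubeconfig to /etc/rancher/k3s/k3s.yaml, and uses a hypothetical local file name.

```hcl
provisioner "local-exec" {
  # Disabling host key checking is a convenience for throwaway servers only.
  command = "scp -o StrictHostKeyChecking=no root@${self.access_public_ipv4}:/etc/rancher/k3s/k3s.yaml ./kubeconfig"
}
```

The copied file points at the API server through a loopback address, so the server entry has to be changed to the public IP before `kubectl --kubeconfig ./kubeconfig get nodes` works from the local machine.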

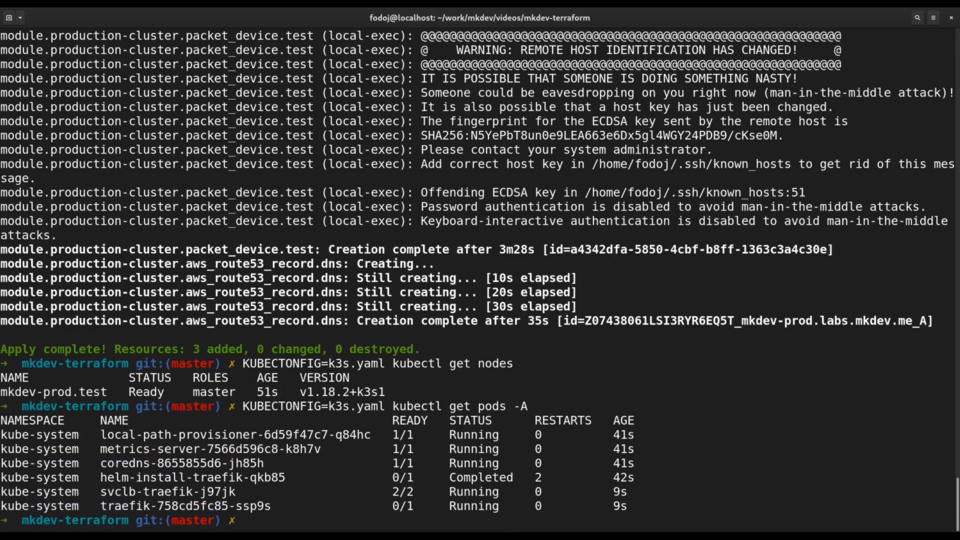

It takes a few minutes for the server to be created and for the SSH connection to become available.

After it is available, we can see the log of the provisioner script right here, in Terraform output.

The installation of k3s is a relatively quick process... And now we have a kubeconfig and we can verify the cluster.

Let me run a simple kubectl command to get the cluster nodes. Seems like we have a new single-node Kubernetes cluster!

Using remote-exec and local-exec provisioners is the simplest way to provision servers created with Terraform. As I mentioned already, there are better ways to do it, but describing them is outside the scope of the Terraform Lightning Course. If you'd like to learn more, please tell us in the comments section.

As for the Terraform Lightning Course, we are done.

By this time, you should have a good understanding of what Terraform is, what the main ideas behind it are and when and how you should use it.

Here's a link to a GitHub project with the complete code for this course. I've added tags for each part of the course, so you can quickly switch between the different topics we've covered.

Terraform has a few more features, and you should watch our Terraform Tips and Tricks series if you want to learn about them.

As this was mostly a beginner's course, we didn't cover many advanced topics, like using Terraform in a team and adopting Terraform company-wide and at scale. We also did not discuss things like testing and continuous deployment of your infrastructure code.

Those topics depend on your particular organization, team and project. We at mkdev will be happy to help you introduce infrastructure as code practices and tools to your organization. Contact us for training and consulting.


Here's the same article in video form for your convenience:

Article Series "Terraform Lightning Course"

- Infrastructure as Code and How Terraform Fits Into It

- Terraform Fundamentals: State Management and Dependency Graph, Creating the First Server

- Configuring Terraform Templates: Variables and Data Resources

- Terraform Tips & Tricks, Issue 1: Format, Graph and State

- Creating Multi-cloud Terraform environment with the help of remote state backends and AWS S3

- Refactor Terraform code with Modules

- Creating Kubernetes Clusters with Terraform: Learning Provisioners

- Terraform Tips & Tricks, Issue 2: Registry, Locals and Workspaces