Refactor Terraform code with Modules

This is the fifth lesson of the Terraform Lightning course.

Today we are going to talk about modules.

Terraform modules let you group resources into reusable packages.

Just like the main Terraform template, each module can accept input variables and return outputs.

Modules are a great way to reduce duplication in your code and automate your organization's best practices.

They let you follow an important programming principle: "DRY", short for "Don't repeat yourself".

If you keep copying and pasting the same code and slightly modifying each copy, you will end up with a mess. So whenever you notice duplication, consider refactoring the code into something more maintainable and reusable.

Let's see how to use Terraform modules.

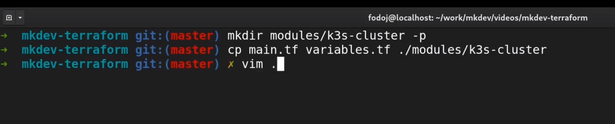

I am going to create a new directory called "modules". Inside this directory, I am going to create another one, called "k3s-cluster".

```shell
# -p also creates the parent "modules" directory if it doesn't exist yet
mkdir -p modules/k3s-cluster
```

Don't worry if you don't know what k3s is: there will be a separate article about it. You don't need to know k3s to follow this course.

Next, I'll copy our variables file and main template into the module directory.

Let's start by modifying the module template.

For now, our module does exactly what the template did before: it creates a new server and a DNS record for that server. The module doesn't need to configure the providers or look up the project, so I'm removing those parts.

Instead of using the data resource for the project, the module will take the project ID as a new variable.

I'm also removing most of the variables from the module's variables.tf file and adding a new one for the project ID.
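Here's a minimal sketch of what modules/k3s-cluster/main.tf might look like after these changes. It is not the course's exact template: the plan, facility, operating system, domain and Route53 zone ID are placeholders, the resource names are hypothetical, and only the server and DNS record described above are shown. It assumes Terraform 0.12+ syntax, that the module declares "environment" and "project_id" variables, and that the packet and aws providers are configured by the root template.

```hcl
# modules/k3s-cluster/main.tf (sketch; all literal values are placeholders)

locals {
  # Hypothetical Route53 hosted zone and domain; replace with your own.
  dns_zone_id = "ZEXAMPLE123456"
  domain      = "k3s.example.com"
}

resource "packet_device" "server" {
  # The hostname is derived from the environment, e.g. "staging-k3s".
  hostname         = "${var.environment}-k3s"
  plan             = "t1.small.x86"
  facilities       = ["ams1"]
  operating_system = "ubuntu_18_04"
  billing_cycle    = "hourly"

  # Passed in by the caller instead of being looked up with a data resource.
  project_id = var.project_id
}

resource "aws_route53_record" "server" {
  zone_id = local.dns_zone_id
  name    = "${var.environment}.${local.domain}"
  type    = "A"
  ttl     = 300

  # Point the record at the server's public IPv4 address.
  records = [packet_device.server.access_public_ipv4]
}
```

There are no provider blocks and no project data resource here: the module inherits the providers from the root template and receives the project ID through a variable.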
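A sketch of the trimmed module variables.tf, assuming the module keeps an "environment" variable alongside the new project ID variable. The descriptions are illustrative.

```hcl
# modules/k3s-cluster/variables.tf (sketch)

variable "environment" {
  description = "Environment name, used in the server hostname and DNS record"
  type        = string
}

variable "project_id" {
  description = "ID of the Packet project to create the server in"
  type        = string
}
```

Neither variable has a default, so every caller must set both. This keeps the module's interface small and explicit.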

Now let's head to our main template. First, I'm removing the packet_device and aws_route53_record resources, as well as the output.

Next, I'm including the module. To do this, I type the "module" keyword followed by a name for this instance of the module.

Inside the module block, I need to specify the module's source. This can be a remote Git repository, an archive at an HTTP URL or, in our case, a local directory.

All other arguments in the block set values for the module's variables. Here I set the environment variable and the project ID, which comes from the data resource.
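Here's a minimal sketch of the module block in the root main.tf. The module name "production", the data resource name "project" and the project name are hypothetical, and the provider blocks from the earlier lessons are assumed to stay in the root template unchanged (they are omitted here). The commented-out Git and HTTP sources use made-up URLs and only illustrate the syntax.

```hcl
# main.tf (root template, sketch)
# Provider blocks from the earlier lessons are assumed to be here, unchanged.

data "packet_project" "project" {
  name = "my-project" # placeholder project name
}

module "production" {
  # Local directory, relative to the root template.
  source = "./modules/k3s-cluster"

  # Other source types (hypothetical URLs); use exactly one source per module:
  # source = "git::https://example.com/org/terraform-modules.git//k3s-cluster?ref=v1.0.0"
  # source = "https://example.com/modules/k3s-cluster.zip"

  # Every other argument sets one of the module's input variables.
  environment = "production"
  project_id  = data.packet_project.project.id
}
```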

To make things more interesting, I'm going to duplicate the whole module definition and create, let's say, a staging server.
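A sketch of the duplicated block, assuming the project is looked up with a data resource declared as data "packet_project" "project" and that the module takes "environment" and "project_id" variables. The module name "staging" is illustrative.

```hcl
# Second instance of the same module in the root main.tf (sketch).
module "staging" {
  source = "./modules/k3s-cluster"

  # A different environment value gives this server its own hostname and DNS name.
  environment = "staging"
  project_id  = data.packet_project.project.id
}
```

Each module block needs a unique name; Terraform uses that name to keep the two instances' resources apart.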

Next, I'm removing the environment variable from the root variables.tf file, as we don't use it there anymore. I'm also removing it from the tfvars file.

If I try to run "terraform apply" now, I get an error saying that the module is not initialized. Even though it's just a local module, Terraform still needs to initialize it, so let me run "terraform init".

Then I run "terraform apply" again.

I have to set the AWS_REGION environment variable, because in this case Terraform won't prompt for it interactively.

Let's examine the result of the command:

Terraform will create 6 resources, 3 for each module instance. It nests every module resource under a module.MODULE_NAME address prefix.

With this module, we can provision new servers with very little duplicated code. Server provisioning is now standardized by the module: it follows the naming conventions, it creates all the dependencies the server needs, and it provides a simple interface, via variables, for configuring the resources inside the module.
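Because module resources live under their own module path, the root template can't reference them directly; instead, it reads the values a module exposes as outputs. A minimal sketch, assuming the module's server resource is named packet_device.server and the two module instances are named "production" and "staging" (all hypothetical names):

```hcl
# modules/k3s-cluster/outputs.tf (sketch)
output "public_ip" {
  description = "Public IPv4 address of the server"
  value       = packet_device.server.access_public_ipv4
}

# main.tf (root template, sketch)
# The root reads each instance's output via module.NAME.OUTPUT_NAME.
output "production_ip" {
  value = module.production.public_ip
}

output "staging_ip" {
  value = module.staging.public_ip
}
```

This is one way to bring back the output removed from the root template earlier, now once per module instance.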



Article Series "Terraform Lightning Course"

- Infrastructure as Code and How Terraform Fits Into It

- Terraform Fundamentals: State Management and Dependency Graph, Creating the First Server

- Configuring Terraform Templates: Variables and Data Resources

- Terraform Tips & Tricks, Issue 1: Format, Graph and State

- Creating Multi-cloud Terraform environment with the help of remote state backends and AWS S3

- Refactor Terraform code with Modules

- Creating Kubernetes Clusters with Terraform: Learning Provisioners

- Terraform Tips & Tricks, Issue 2: Registry, Locals and Workspaces